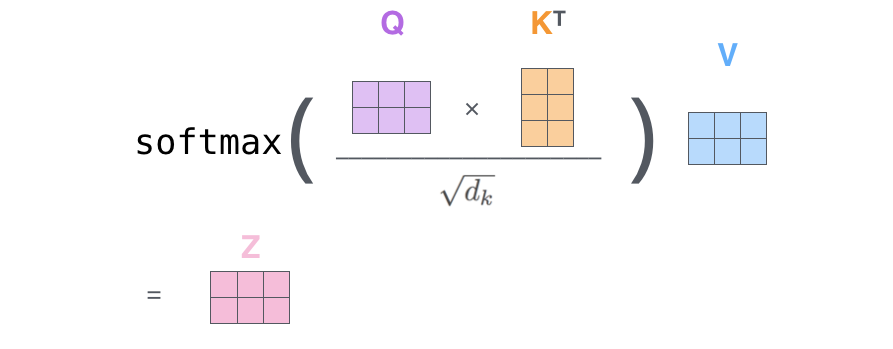

Self-attention is the core idea behind transformers.
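As a rough illustration of what self-attention computes, here is a minimal sketch of single-head scaled dot-product self-attention, softmax(QKᵀ / √d_k)·V, written in plain Python so it runs without extra libraries. It is not the article's own code. The helper names (`softmax`, `matmul`, `transpose`, `self_attention`) and the toy input and weight matrices are hypothetical, chosen only to show the mechanics: every token is projected into a query, key, and value, and each output row is a weighted average of the value rows.

```python
import math


def softmax(row):
    # Subtract the max before exponentiating for numerical stability.
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    # Nested-list matrix product: (n x k) @ (k x m) -> (n x m).
    return [
        [sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def transpose(m):
    return [list(col) for col in zip(*m)]


def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over x (n tokens x d_model)."""
    # Project every token into query, key, and value vectors.
    q = matmul(x, w_q)
    k = matmul(x, w_k)
    v = matmul(x, w_v)
    d_k = len(w_k[0])
    # Score every query against every key, scaled by sqrt(d_k).
    scores = matmul(q, transpose(k))
    scaled = [[s / math.sqrt(d_k) for s in row] for row in scores]
    # Each row of weights is a probability distribution over the tokens.
    weights = [softmax(row) for row in scaled]
    # Each output row is a weighted average of the value rows.
    return matmul(weights, v), weights


# Toy example (hypothetical values): three 2-dimensional tokens, identity projections.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
out, weights = self_attention(x, identity, identity, identity)
```

Real transformer layers learn `w_q`, `w_k`, and `w_v`, run several heads in parallel, and use a tensor library rather than nested lists; the identity projections here only keep the arithmetic easy to follow.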

#python
